Memetic Surveillance of Black Women

Black women are more likely to be the targets of harassment on social media platforms.1 The anti-Black and gendered racism prevalent on these networks deeply impacts the lives of Black women, and perhaps nowhere are these ideas more evident than in anti-Black memes.

Memes are ideas that spread through culture. Specifically, internet memes are repurposed images, videos, text, or other forms of media that convey meaning, incite humor, and build community.2 Though they may be created by a specific person, viral memes become authorless. Unmoored “from the trappings of an author’s reputation or intention, [memes] become the collective property of the culture,” as Dr. Joan Donovan put it in the MIT Technology Review. This collective property can also serve as a surveillance mechanism.

Memetic Surveillance

This introduces the concept of memetic surveillance: the act of utilizing images of historically marginalized groups without consent, both on and offline, to perpetuate symbolic violence.3 Symbolic violence is a concept introduced by Pierre Bourdieu to describe attacks that seek to delegitimize a person based on preconceived notions.4

Memetics can become a tool that reinforces white dominance by policing and surveilling Black women, creating a human, social, and physical privacy vulnerability both on and offline.

Memetic surveillance has two forms: Individual Surveillance and Ambient Surveillance.

Individual Surveillance is the nonconsensual use of a person's image in a meme. Images can be taken from social media accounts, stock image galleries, or real-life interactions where someone snaps a picture of someone else.

- Memes that use authentic images of people carry identifying information which can result in privacy risks to the individual and enable doxing, harassment, or stalking.

Ambient Surveillance is when a group or individual watches the online expressions and behavioral patterns of a particular identity group, such as Black women, and attempts to imitate, stereotype, or trivialize that group in order to turn its members into meme fodder. Ambient Surveillance can result from so-called "cringe watching" or "hate watching," wherein people observe groups of people they don't like in order to mock them.

- Ambient surveillance results in the proliferation of stereotypes, racism, sexism, classism or other forms of hate in an attempt to impersonate historically marginalized individuals.

Historical Patterns of Surveillance

In 2002, Patricia Hill Collins coined the concept "matrix of domination."5 It describes four interrelated domains of how power is constructed in our society. Hill Collins suggests that power is molded through structural, disciplinary, hegemonic, and interpersonal lenses. Her matrix highlights experiences of oppression and the complex social relations and processes that produce these experiences. The experience of Black women online is one rife with oppression and inequity, which makes the internet itself less safe and accessible to Black women. This digital divide on social networks calls for intersectional digital justice. Dr. Christopher Gilliard has argued that achieving equity online doesn't just mean evening out who has access to the internet but also equalizing what kind of access they have so that one group is not more at risk for harm when they go online.6

Racialized and racist material used as humor based on the denigration of people7 has a longstanding foundation in social participatory discourse and engagement. While memes can be humorous – depending on the individual or context – that same humor can reduce individuals or subgroups into a cycle of objectification, racism, sexism, hyper-sexualization, commodification, and harm.

As Brandi Collins-Dexter wrote in "Butterfly Attack: Operation Blaxit," non-Black people who impersonate Black people online often closely observe Black communities online in order to imitate them. They adopt false identities to share ideas in Black spaces and to take up space.

The design of social media makes it easy to engage in surveillance, defined by research scientist Kurt Thomas as the leveraging of privileged access to a person’s online information to monitor the target’s activities, location, or communication.8 “Privileged” generally means that the information accessed is not open to the general public. One could argue that tweets or posts made online are public and therefore are fair game for meme-making. However, when someone uploads images online in a certain context, that doesn't mean they have given their consent for those images to be used in other contexts, even if it may be legal to do so. Some people may not even realize they are sharing content publicly, given that different social media platforms have different privacy defaults. A 2017 study about users’ understanding of privacy on Twitter found that 33 percent of the 449 people surveyed did not realize their tweets were public by default and visible to anyone. Furthermore, some memes are created by taking photos of people in real life without their knowledge. In this case, though these people were in public when photographed, the act of taking their image and putting it on social media, where it can spread at scale through the culture, becomes a sort of surveillance.

Examples of Memetic Surveillance

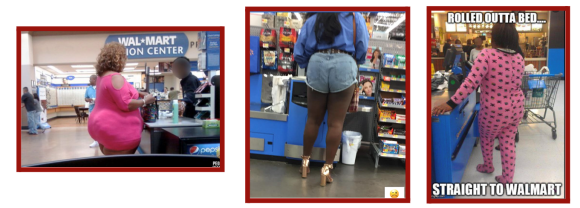

Photos of people shopping at Wal-Mart are a popular format for online image macro memes.9 One subcategory of this “People of Walmart” meme focuses specifically on Black women shopping at Wal-Mart or similar stores. These images are snapped by strangers without the knowledge or consent of the women in the photos. They are then uploaded to the internet with or without text, where they can be iterated on by other internet users, who turn them into new memes. This whole process serves to reinforce underlying patterns of social domination.10

These memes pose a privacy invasion in memetic form. Black women in these images can be identified, harassed, stalked or worse based on these memes. They also highlight how memes can inflict symbolic violence. In these memes, outsiders who perceive themselves to be dominant socially use the size, race, and perceived class status of the women in the photos as evidence that they are a joke to be memed.

Memetic surveillance is a modern-day example of historical racist practices such as “human zoos” like the German Völkerschauen, wherein Black people were presented as curios for a white European public from 1874 to 1930.11

- 1 Brandeis Marshall, “Algorithmic misogynoir in content moderation practice,” Heinrich Böll Stiftung (2021).

- 2 A.M. Guilmette, “Review of the psychology of humor: an integrative approach,” Canadian Psychology 49(3): 267–268 (2008).

- 3 “symbolic violence,” Oxford Reference, accessed June 27, 2022, https://www.oxfordreference.com/view/10.1093/oi/authority.2011080310054…

- 4 “Symbolic Violence,” Science Direct, accessed June 27, 2022, https://www.sciencedirect.com/topics/social-sciences/symbolic-violence

- 5 Patricia Hill Collins, “Black Feminist Thought: Knowledge, Consciousness, and the Politics of Empowerment” (Routledge, 1990).

- 6 Chris Gilliard, “Digital Redlining, access, and privacy,” Common Sense Education (November 6, 2019), https://www.commonsense.org/education/articles/digital-redlining-access…

- 7 “Jim Crow Museum: Home,” Ferris State University, accessed June 27, 2022, https://www.ferris.edu/jimcrow/

- 8 K. Thomas, et al., “SoK: Hate, Harassment, and the Changing Landscape of Online Abuse,” 2021 IEEE Symposium on Security and Privacy (SP) (2021): pp. 247-267, doi: 10.1109/SP40001.2021.00028

- 9 “People of Walmart,” Know Your Meme, accessed June 27, 2022, https://knowyourmeme.com/memes/sites/people-of-walmart

- 10 Patricia Hill Collins, “Black Feminist Thought: Knowledge, Consciousness, and the Politics of Empowerment” (Routledge, 1990).

- 11 Anne Dreesbach, “Colonial Exhibitions, 'Völkerschauen' and the Display of the 'Other',” European History Online (EGO) (Leibniz Institute of European History: May 3, 2012), http://www.ieg-ego.eu/dreesbacha-2012-en

Anti-Blackness and surveillance are deeply interconnected with colonialism and imperialism, which fetishize and hypersexualize the populations being dominated. Black women are a particularly common target of the anti-Black communities whose currency is memes.

Social networking applications become a space for the surveillance and punishment of feminist activism, and for populist right-wing content promoting authoritarian and minority-threatening ideologies.1 For example, 4chan’s /Politically Incorrect/ board, famous for its far-right content, including white supremacist and misogynistic ideas, is a hotbed of anti-Black memes about Black women. In online forums like these, such content is commonly shared, sometimes with the direct intent to upset or provoke the targeted identity group.2

Entire communities on Reddit have existed in order to discuss, analyze, fetishize, and demean Black people, particularly Black women. In 2015, Reddit finally banned the worst of these, with unsubtle names like r/CoonTown and r/chimpire.3 Other, lesser-known communities such as the website Niggermania still exist as spaces for racists to share and find anti-Black memes.

But the harms against Black women caused by these memes are not confined to these alternative tech spaces. Mainstream social media is filled with this kind of content as well. A 2017 study conducted by Amnesty International and Element AI concluded that on Twitter, Black women were far more likely to experience harassment and hate speech than other identity groups. In an examination of 288,000 tweets sent to 778 female-identified politicians and journalists in the U.S. and U.K., the researchers found Black women were 84% more likely than white women to be mentioned in abusive or problematic tweets that amplify sexual and physical threats, misogyny, and racial slurs. The study highlights that one in ten tweets mentioning Black women were abusive or problematic, compared to one in 15 for white women. The targeting of Black women as an act of surveillance leads to attacks, bullying, defamation, harm, hate, racism, stalking, and threats.4

The Twitter account @DeludedShaniqua was a prime example of ambient memetic surveillance until Twitter suspended it in 2021.5 The account was a pseudonymous impersonation of a Black woman that posted text and images meant to perform racist perceptions of Black women.

The page served as a user-submitted repository of memes and GIFs targeting Black women. These images, tropes, and stereotypes were collected via retweet or direct posting in order to create a database of “entertainment” fuelled by anti-Black memes that were fatphobic, colorist, racist, and sexist.

Technological Affordances to Enable and Enhance Memetic Surveillance

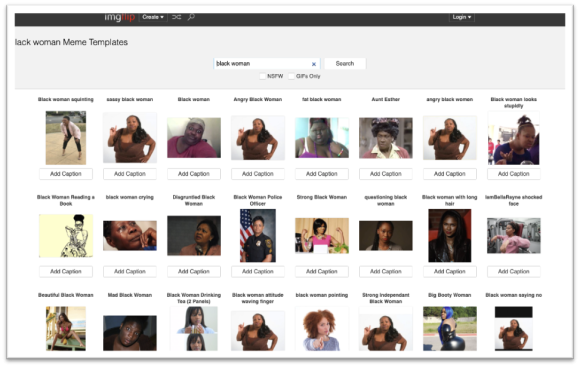

Memes about Black women are normalized online. This is particularly true with services called meme generators. Meme generators generate memes and captions for users, sometimes employing predictive text and suggestions based on what is trending and what people have searched for in the past. These generators offer users the option to create memes with categorized templates built on pre-existing racist stereotypes – for example, “Angry Black Woman” or “Disgruntled Black Woman.”

Imgflip, a web-based tool that allows people to create and share images, houses approximately 100M public meme captions created by users. Imgflip has an algorithmic feature called “This Meme Does Not Exist,” which generates memes and captions through an artificial neural network. The network uses character-level prediction, so prefix text can be specified to influence the text that is generated. This algorithm was trained on public user-submitted images and templates, which means any bias or cultural input submitted by human creators was replicated in the algorithm. This tool can pose a large-scale privacy risk for anyone whose picture or information is part of these templates.
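To make the mechanism concrete, here is a minimal sketch of character-level prediction steered by a prefix. It is not Imgflip's model: it swaps the neural network for a much simpler character-level Markov chain, and the function names, the `order` parameter, and the placeholder captions are all assumptions made for illustration. What it shows is that a generator of this kind can only recombine patterns present in its training captions, so whatever those captions contain is what comes back out.

```python
import random
from collections import Counter, defaultdict

# Hypothetical, deliberately neutral stand-ins for user-submitted captions.
PLACEHOLDER_CAPTIONS = [
    "when the wifi drops again",
    "when the coffee runs out",
    "when the meeting runs long",
]


def train_char_model(captions, order=3):
    """Count which character follows each `order`-character context."""
    model = defaultdict(Counter)
    for caption in captions:
        padded = caption + "\n"  # newline marks the end of a caption
        for i in range(len(padded) - order):
            context = padded[i:i + order]
            model[context][padded[i + order]] += 1
    return model


def generate(model, prefix, order=3, max_len=60, seed=0):
    """Extend `prefix` one character at a time using the learned counts."""
    rng = random.Random(seed)
    text = prefix
    while len(text) < max_len:
        followers = model.get(text[-order:])
        if not followers:  # context never seen in training: stop
            break
        chars, weights = zip(*followers.items())
        next_char = rng.choices(chars, weights=weights)[0]
        if next_char == "\n":  # model predicts the caption ends here
            break
        text += next_char
    return text


if __name__ == "__main__":
    model = train_char_model(PLACEHOLDER_CAPTIONS)
    print(generate(model, "when the "))
```

Every character the sketch emits was observed after the same context somewhere in the training captions. A neural network generalizes more loosely than this, but the dependence on the submitted data is the same in kind.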

- 1 Brandeis Marshall, “Algorithmic misogynoir in content moderation practice,” Heinrich Böll Stiftung (2021).

- 2 Ibid.

- 3 Alex Hern, “How Reddit took on its own users – and won,” The Guardian, December 30, 2015, https://www.theguardian.com/technology/2015/dec/30/reddit-ellen-pao

- 4 K. Thomas, et al., “SoK: Hate, Harassment, and the Changing Landscape of Online Abuse,” 2021 IEEE Symposium on Security and Privacy (SP) (2021): pp. 247-267, doi: 10.1109/SP40001.2021.00028

- 5 Shaniqua Posting Delusions (@DeIudedShaniqwa), Twitter, archived on https://web.archive.org/web/20220109171652/https://twitter.com/DeIudedS…

When searching the terms “Black Woman” and “Black Women,” the platform returns a sea of memetic templates of Black women with thousands of captions. Categorical tags center on largely negative, stereotypical behavioral patterns, racist tropes, fatphobia, colorism, hypersexuality, ineducability, and misogynoir. A few of the hundreds of templates about Black women that Imgflip offers have names like: “Sassy Black Woman,” “Angry Black Woman,” “Black Woman Looks Stupidly,” “Black Woman Reading A Book,” “Black Woman Crying,” “Disgruntled Black Woman,” “White Girl Strangles Black Woman,” “Contemplative Black Woman,” “White Girl Wants To Choke Black Woman With Her Legs,” “Black Woman Ignoring Black Man,” “Black Woman Drive Through,” and “Armed Black Woman.”

The meme templates that Imgflip serves up based on these phrases are of real people – sometimes stock images, other times photos with unclear provenance. Searching the terms “White Women” and “White Woman” mostly surfaces images of comedians, actors, and infamous white women, like the “Karen” meme.

AI-generated memes, as evidenced on web-based tools like Imgflip, have been seen in theory as convenient, innovative, and novel. But they also lead to the proliferation of images of Black women photographed without their consent, opening women up to symbolic violence and privacy invasions. Whether it’s through Imgflip or another platform, individuals have unrestricted access to images and templates that hold faces of individuals unknown to us. And they contain data that can incriminate or endanger individuals due to memetic data expressing racist tropes, sexism, classism, misogyny, and misogynoir.

Discussion

Societal perceptions of Black women shape how memes about them are constructed and repurposed. By perpetuating racist tropes, sexism, colorism, classism, misogyny, and misogynoir, these memes become controlling images that spread and reward marginalizing ideologies. The virality of racist memes provokes reactions that fuel people to continue "hate watching" and "cringe watching" communities to mine their behavior patterns in order to develop stereotyped content for memes.

Even when the memes are not outright derogatory, the humor and format of memes that rely on stereotypes reduce Black women into a cycle of objectification, hyper-sexualization, commodification, and harm. Memetic surveillance can threaten a person's autonomy and control.

Many people who appear in memes may not understand the potential risk to their privacy the meme represents. The more historically marginalized an internet user is, the more magnified privacy vulnerabilities become due to community-specific patterns around technology use and knowledge gaps about privacy and security-protective tools. [vi] Literacy about memetic surveillance is essential to combat these risks.

Further areas of memetics that bear research into surveillance: the use of meme surveys and questionnaires on social media that harvest user data; memes as a means of ; policies and regulations that could mitigate the harms caused by surveillant memes.

The author would like to thank Professor Chris Gilliard, Brian Friedberg, and Professor Brooklyne Gipson for their input and collaboration.